AI-generated, human-reviewed.

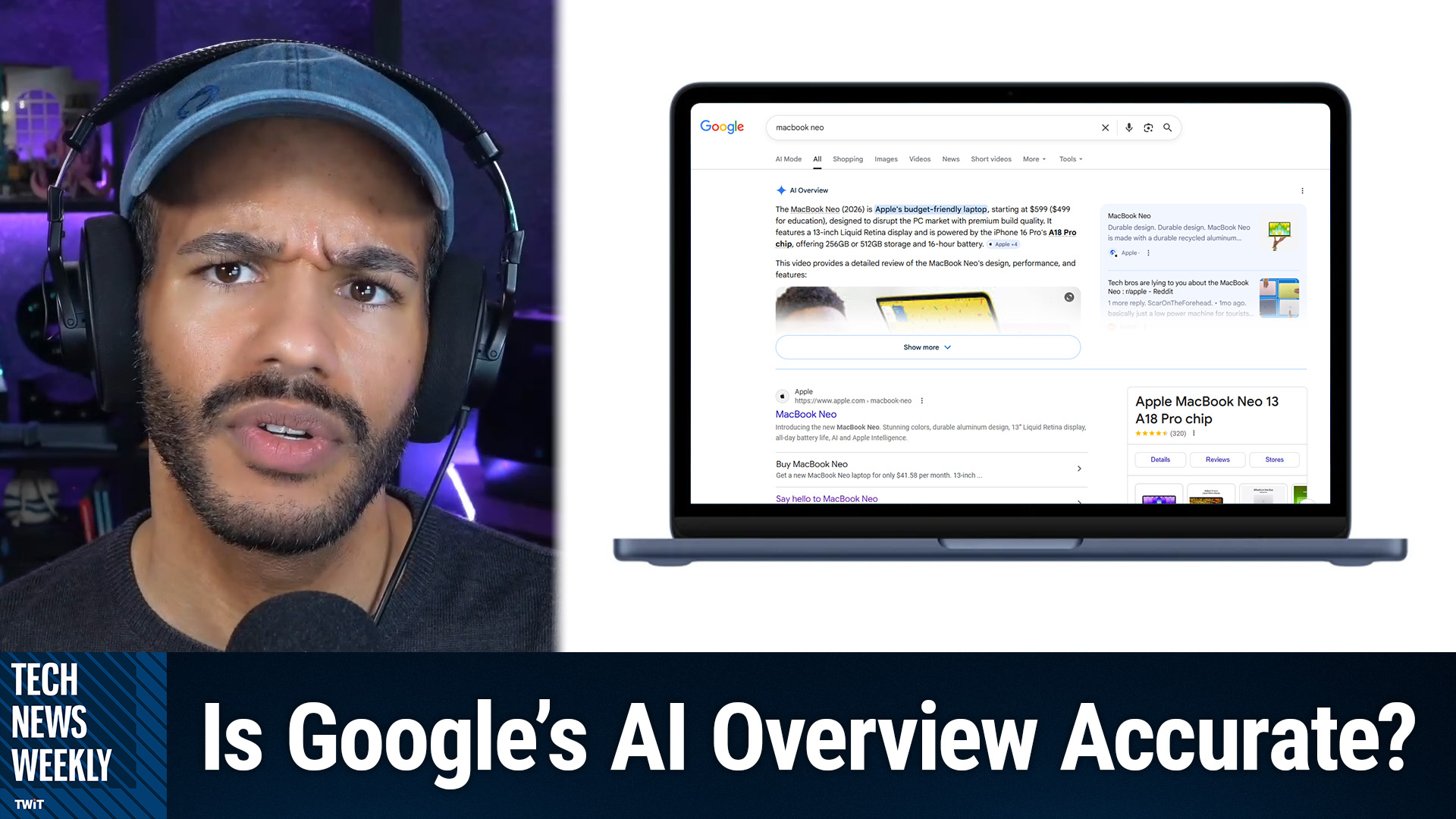

Google's AI-generated search overviews might seem like reliable shortcuts, but even their "high" accuracy rates can produce millions of misleading or incorrect answers every hour. On Tech News Weekly, Mikah Sargent and Amanda Silberling break down why these search results frequently fail—and what this means for everyday users and journalists.

How Reliable Are Google's AI-Powered Search Overviews?

Tech News Weekly covered a recent New York Times investigation that found Google's AI overviews, the summaries found atop many search results, are correct about 91% of the time. That might sound impressive, but Google handles about 5 trillion searches a year. If most of those searches show an overview, the remaining 9% error rate works out to tens of millions of mistakes every hour. According to Mikah Sargent, even small percentages become massive at that scale.
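The arithmetic behind that hourly figure can be checked in a few lines. This is a back-of-the-envelope sketch, not the Times' methodology: the search volume and accuracy rate come from the episode, while the function name `errors_per_hour` and the assumption that every search shows an AI overview (which makes the result an upper bound) are ours.

```python
# Figures quoted in the episode.
ANNUAL_SEARCHES = 5_000_000_000_000  # about 5 trillion Google searches per year
ACCURACY = 0.91                      # share of AI overviews judged correct

HOURS_PER_YEAR = 365 * 24


def errors_per_hour(annual_searches: int, accuracy: float) -> float:
    """Estimate wrong overviews per hour.

    Assumption (ours): every search displays an AI overview,
    so the result is an upper bound rather than a measured count.
    """
    searches_per_hour = annual_searches / HOURS_PER_YEAR
    return searches_per_hour * (1 - accuracy)


if __name__ == "__main__":
    per_hour = errors_per_hour(ANNUAL_SEARCHES, ACCURACY)
    print(f"{per_hour / 1e6:.1f} million errors per hour")  # prints 51.4
```

Under those assumptions the estimate lands at roughly 51 million errors an hour, which is consistent with the "tens of millions" figure discussed on the show.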

Google's AI overviews are powered by large language models (LLMs) like Gemini. These models generate answers based on probabilities learned from huge amounts of data, not fixed databases or explicitly programmed facts. This fundamental design means even the best AI will sometimes make mistakes, as it's "guessing" what answer fits best, rather than citing genuine authorities.

The Hidden Flaw: Ungrounded Sourcing in AI Answers

A major concern discussed on Tech News Weekly is the concept of "ungrounded" answers. According to Mikah Sargent, these are responses where the AI provides the right answer, but links to sources that do not actually back up its claims. For example, the system might state a scientific fact and reference a reputable website—but the linked page never contains the asserted information.
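To make the idea of grounding concrete, here is a deliberately naive sketch of what checking a citation might look like. It is purely illustrative: the function `is_grounded`, the keyword-overlap heuristic, and the 0.8 threshold are hypothetical choices of ours, not how Google or the New York Times evaluated sources, and it assumes the cited page's text has already been fetched.

```python
import re


def _words(text: str) -> set[str]:
    """Lowercase a string and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def is_grounded(claim: str, source_text: str, threshold: float = 0.8) -> bool:
    """Return True if most of the claim's key words appear in the source.

    Hypothetical heuristic: keyword overlap is a crude stand-in for a
    human reading the page and confirming it supports the claim.
    """
    # Ignore very short tokens (articles, prepositions, small numbers).
    claim_words = {w for w in _words(claim) if len(w) > 3}
    if not claim_words:
        return False
    overlap = len(claim_words & _words(source_text)) / len(claim_words)
    return overlap >= threshold


if __name__ == "__main__":
    claim = "Water boils at 100 degrees Celsius at sea level"
    supporting_page = "At sea level, water boils at 100 degrees Celsius."
    unrelated_page = "Water covers about 71 percent of the Earth's surface."

    print(is_grounded(claim, supporting_page))  # True
    print(is_grounded(claim, unrelated_page))   # False
```

In the second case the claim itself is true, but the linked page never states it. That is the "right answer, wrong source" situation the hosts describe as ungrounded.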

After Google's Gemini 3 launch, more than half of the AI's so-called "correct" answers cited sources that didn't actually corroborate the AI's conclusions. Amanda Silberling emphasized that this matters because people trust answers more when they see source links, often without double-checking them.

Why Do Small Error Rates Matter Given Massive Search Volumes?

With billions of Google searches each day, a 9% inaccuracy rate means millions of people are exposed to incorrect or misleading information every hour. Amanda Silberling pointed out that even percentages that seem trivial (such as the 0.1% of ChatGPT conversations that mention suicide) affect millions of people once they are scaled up.

For consumers searching for anything, from health advice to breaking news, these compounded errors can cause confusion or even harm. For journalists and researchers, verifying every AI overview is critical, since mistakes and faulty sourcing remain common.

How Are Regular Users Responding?

Many tech-savvy listeners and journalists scroll past the AI overviews, preferring traditional search links to assess information credibility. But as Mikah Sargent noted, less-experienced users—or those outside the "tech bubble"—tend to trust these summaries at face value, especially given their prime spot atop search pages.

Google does show a disclaimer ("AI can make mistakes, so double check responses"), but the overview's placement at the top of results still signals trustworthiness, which is misleading for such an imperfect technology.

Why Google Deploys Imperfect AI Results Anyway

Tech News Weekly highlighted that, despite known accuracy and sourcing flaws, Google continues rolling out AI overviews to gain a competitive advantage, serve its financial interests, and keep pace with industry trends. Amanda Silberling joked that explicit warnings about unreliability wouldn't serve shareholder value, a reminder that business interests may override user safety when new technology is deployed.

What You Need to Know

- Google's AI overviews are "correct" about 91% of the time, but that leaves millions of wrong answers every hour.

- Many "correct" answers cite sources that don't actually contain the information claimed—making them ungrounded.

- The underlying AI is designed to guess answers from data, not to strictly follow factual rules—errors are inevitable.

- Users often trust AI summaries due to their prominent placement and cited sources, even when these references are inaccurate.

- Both everyday internet users and journalists should cross-check AI-generated answers before trusting them.

- The vast scale of Google search means even "minor" error rates impact millions within minutes.

- Google's prioritization of AI overviews reflects business incentives more than guaranteed accuracy.

The Bottom Line

On Tech News Weekly, Mikah Sargent and Amanda Silberling explained why Google's AI overviews are less trustworthy than they appear, and why their error rates, when multiplied by Google's enormous reach, can mislead or confuse millions. Until AI accuracy and source grounding improve substantially, users and journalists alike should approach these search summaries with skepticism and always double-check their sources.

Want more expert analysis? Subscribe and never miss an episode:

https://twit.tv/shows/tech-news-weekly/episodes/432